Unlocking Retrieval-Augmented Generation with Clarity and Simplicity

Taking LLMs to the Next Level: Better Relevance and Factual Correctness with Retrieval-Augmented Generation (RAG)

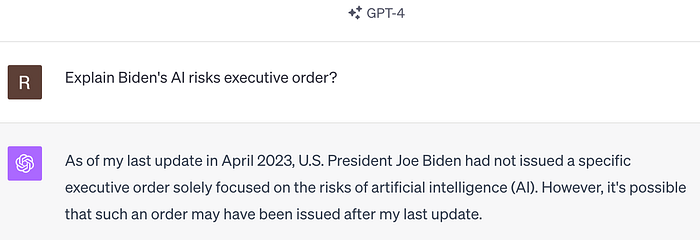

Suppose you asked a Large Language Model (LLM) like ChatGPT-4 the following question:

Explain Biden’s AI risks executive order?

Because this is recent news, an LLM may not know the answer: the topic did not appear in the data it was trained on.

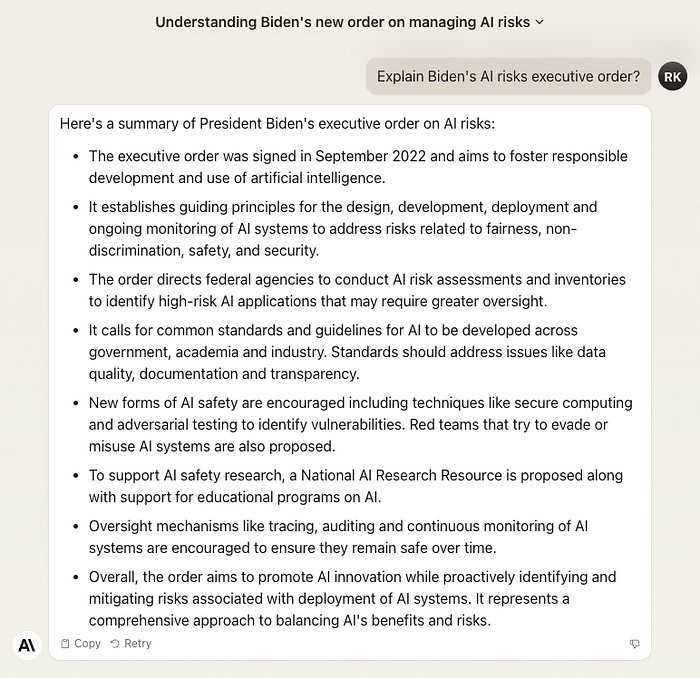

Some LLMs respond that they don’t know the answer, as shown above. Others hallucinate, generating information that is not grounded in reality or factual data, as shown below.

Challenges with LLMs

- Static Knowledge Base: Large Language Models (LLMs) like GPT are limited by their training data; they don’t know about events or information that emerged after that data was collected. This means they cannot provide up-to-date information on recent developments.

- Difficulty Discerning Fact from Fiction: LLMs sometimes “hallucinate,” presenting made-up information as fact. They can do this with confidence, making it tricky to identify inaccuracies.

Now imagine an open-book exam, where you can consult reference material to look up the answer to each question. RAG operates on a similar principle: it draws on external sources to ground its responses in the most current information.

RAG stands for Retrieval-Augmented Generation.

RAG is an AI framework that retrieves relevant information from a specified external source (such as documents, Google searches, or Wikipedia) to answer questions. By passing the question, the relevant information retrieved from external sources, and a prompt to the Large Language…
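To make the flow above concrete, here is a minimal sketch of the retrieve-then-prompt step. Everything in it is an assumption made for illustration: the `DOCUMENTS` list is placeholder text standing in for an external source, `retrieve` uses simple word-count cosine similarity rather than a real search engine or embedding model, and `build_prompt` is a hypothetical helper. The final call to an LLM is left out, since it depends on whichever model and API you use.

```python
import math
import re
from collections import Counter

# Placeholder snippets standing in for an external knowledge source.
# These are illustrative only; a real system would index actual documents.
DOCUMENTS = [
    "Placeholder: summary of an executive order on AI risks would go here.",
    "Placeholder: notes on retrieval augmented generation.",
    "Placeholder: an unrelated cooking recipe.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents whose wording best overlaps the question."""
    query = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, context):
    """Combine the instruction, retrieved context, and question into one prompt."""
    context_block = "\n".join(f"- {snippet}" for snippet in context)
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say you don't know.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {question}\nAnswer:"
    )


question = "Explain Biden's AI risks executive order?"
context = retrieve(question, DOCUMENTS, k=1)
prompt = build_prompt(question, context)
print(prompt)  # This string is what would be sent to the LLM.
```

The design choice to illustrate is the separation of concerns: retrieval picks the relevant text, and the prompt tells the model to answer from that text instead of from memory. That is what lets the model address topics absent from its training data.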